
Final Retrospective - Tri 2

An Overview of My Second Tri Learnings

- Personal Analytics

- Instructional Framework Development

- 1. Groups Management System (Frontend + Spring Integration)

- 2. Baseline Smart Group Formation (Pre-Feedback)

- 3. Adding Feedback-Based Adaptation

- 4. Backend Update: Feedback → Score Adjustment

- 5. Endpoint Response After Update

- 6. Technical Challenges: Feedback Validation

- 7. Current System Capabilities

- 8. Summary

- Night at the Museum — User Testing Feedback (Glows & Grows)

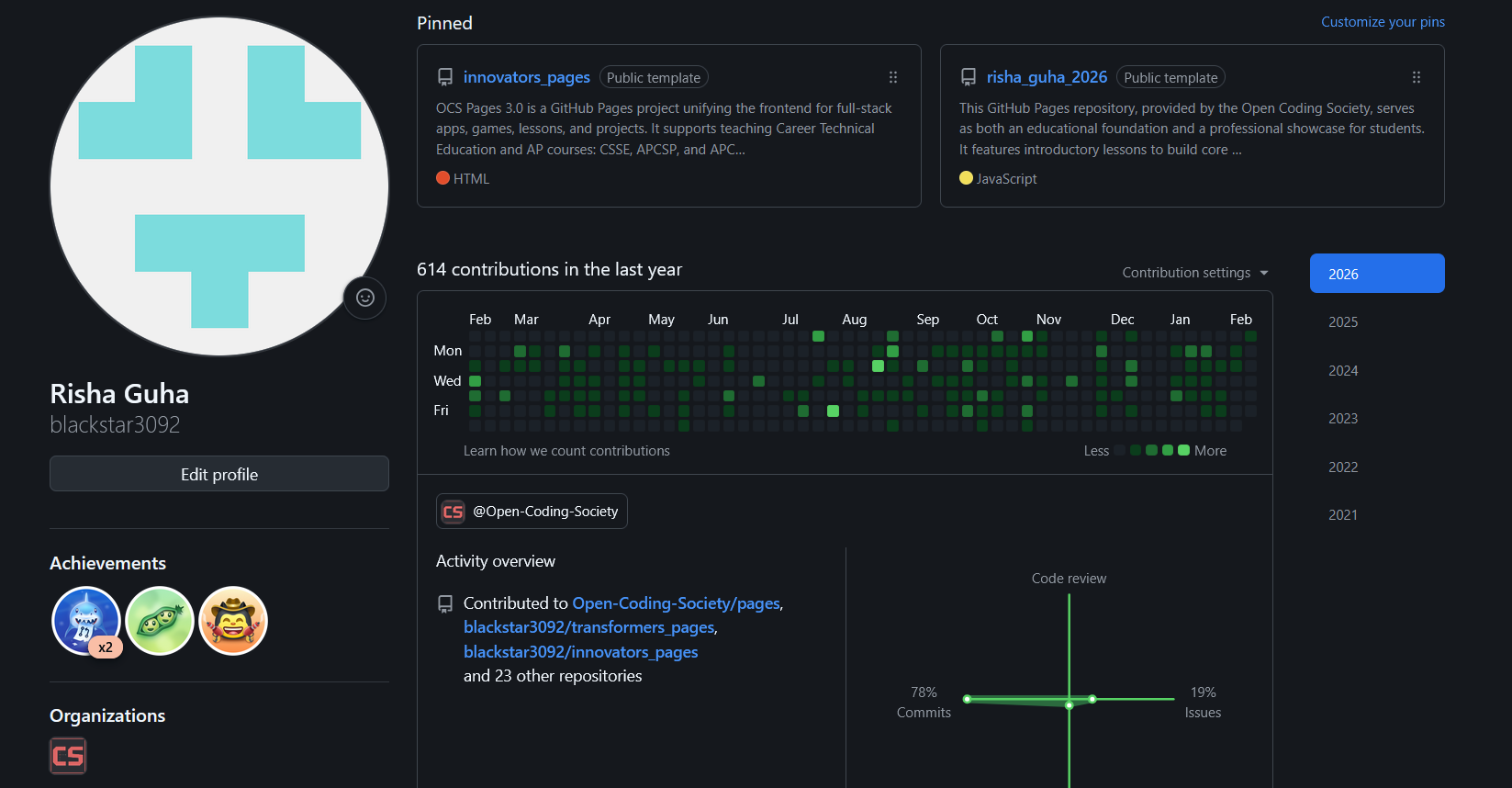

Personal Analytics

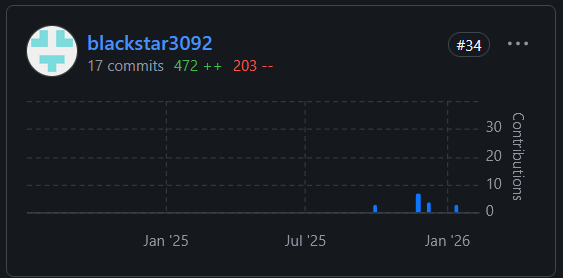

Issues

I created many issues across planning, debugging, feature tracking, and instructional design. Doing so helped me improve the following skills:

- Proactive organization before coding

- Clear documentation of problems + solutions

- Strong project ownership and workflow breakdown

- Planning-driven development instead of chaotic development

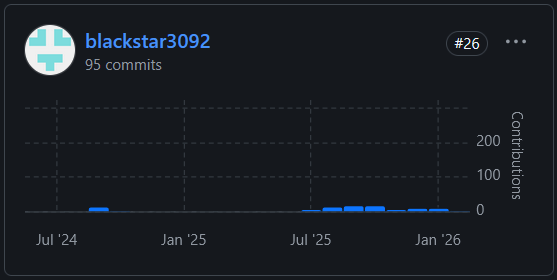

Commit Activity

Total Commits This Year: 613. This reflects consistent contributions across Spring and GitHub Pages throughout the trimester.

What this shows

- Strong sustained participation over time

- Continuous iteration and consistent involvement in both backend and frontend layers

- Willingness to refine and improve features after initial implementation

Pull Requests

My pull requests were a key part of my role as scrum master of my groups. They helped me carry feature ownership from idea → implementation → merge. I also improved collaboration within my group by reviewing our workflow and making sure every team member was represented in PRs. My PRs merged cleanly and were consistent contributions to the main branch.

Instructional Framework Development

I also created structured frameworks for teaching FRQs and related concepts. This helped us organize and conduct 4 FRQ lessons so far, and it has helped students engage with lessons while their peers present. It also enabled everyone in the class to create lessons, thereby strengthening their own understanding of their FRQ.

Project Contribution Overview

This trimester, I worked primarily on the group management and AI-based team formation system. The goal was not just to create groups, but to:

- Build a structured UI for managing groups (create, edit, filter, delete, add/remove members)

- Connect that UI to both Spring (groups + people) and Flask (persona + scoring logic)

- Extend the AI grouping endpoint so prior feedback can influence team formation

The most significant technical change was modifying the /api/persona/form-groups endpoint so that prior experiences (ratings + persona mix) affect group scoring.

1. Groups Management System (Frontend + Spring Integration)

The Groups Management page handles:

- Filtering by period and class

- Searching groups

- Creating and deleting groups

- Adding/removing members with multi-select search

- Admin-restricted actions

- Smart Group Formation modal

State Management

The page maintains internal state to avoid unnecessary refetches.

Key variables:

```javascript
let allGroups = [];
let peopleCache = []; // Cache people to avoid repeated API calls
let activePeriods = [];
let activeClasses = [];
let loadingCount = 0; // Track concurrent loading operations
let isAdmin = false; // Track if current user is an admin
let selectedUsersForGroup = {}; // Track multi-select members per group
let collapsedGroups = {}; // Track collapsed state per group ID

// Smart group state
let generatedGroups = []; // Store generated groups before saving
```

Instead of refetching all groups after every mutation, I update local state directly:

```javascript
// After removing a member: mutate local state instead of refetching
const group = allGroups.find(g => g.id === groupId);
if (group && group.members) {
  group.members = group.members.filter(m => m.id !== personId);
}
renderGroups();

// After deleting a group: remove from allGroups locally
allGroups = allGroups.filter(g => g.id !== groupId);
renderGroups();

// After creating a group: push to local state
newGroup.members = [];
allGroups.push(newGroup);
renderGroups();

// After adding members: append only if not already present
if (!group.members) group.members = [];
successes.forEach(person => {
  if (!group.members.find(m => m.id === person.id)) {
    group.members.push({ id: person.id, name: person.name, uid: person.uid });
  }
});
renderGroups();
```

This reduces API load and keeps the UI responsive.

2. Baseline Smart Group Formation (Pre-Feedback)

Originally, group formation was handled entirely by Flask through:

POST /api/persona/form-groups

Original Algorithm Structure

- Shuffle user list

- Partition into groups of group_size

- Collect UserPersona objects per group

- Calculate team score using:

score = UserPersona.calculate_team_score(group_personas_list) if group_personas_list else 0.0

Scoring Logic (High-Level)

- 40% student persona diversity

- 60% achievement persona similarity
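The real formula lives in UserPersona.calculate_team_score, which is not shown here. As a rough illustration of how a 40/60 blend like this could behave, here is a minimal sketch. The function name sketch_team_score, the two measures (share of distinct student personas, share of members with the most common achievement persona), and the achievement labels are all assumptions made for the example, not the project's actual logic.

```python
from collections import Counter


def sketch_team_score(student_personas, achievement_personas):
    """Hypothetical 40/60 blend; NOT the real UserPersona.calculate_team_score."""
    if not student_personas or not achievement_personas:
        return 0.0
    # Diversity: share of distinct student personas (1.0 means all different).
    diversity = len(set(student_personas)) / len(student_personas)
    # Similarity: share of members holding the most common achievement persona.
    top_count = Counter(achievement_personas).most_common(1)[0][1]
    similarity = top_count / len(achievement_personas)
    return round(100 * (0.4 * diversity + 0.6 * similarity), 2)


# Varied student personas + shared achievement persona scores highest.
mixed = sketch_team_score(["indy", "salem", "phoenix", "cody"], ["builder"] * 4)
# Identical student personas + scattered achievement personas scores lowest.
uniform = sketch_team_score(
    ["indy"] * 4, ["builder", "planner", "tester", "designer"]
)
print(mixed, uniform)  # 100.0 25.0
```

Under these assumed measures, a team is rewarded for mixing student personas while sharing an achievement style, which is the trade-off the two weights describe.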

The endpoint iterates multiple random groupings and selects the one with the highest average score.

```python
import random

best_grouping = None
best_avg_score = 0
iterations = 50

for _ in range(iterations):
    shuffled = user_uids.copy()
    random.shuffle(shuffled)

    groups = []
    remaining = shuffled.copy()

    # Full-size groups
    while len(remaining) >= group_size:
        group_uids = remaining[:group_size]
        group_users = [uid_to_user[uid] for uid in group_uids]

        group_personas_list = []
        for user in group_users:
            personas = UserPersona.query.filter_by(user_id=user.id).all()
            if personas:
                group_personas_list.append(personas)

        score = UserPersona.calculate_team_score(group_personas_list) if group_personas_list else 0.0
        groups.append({'user_uids': group_uids, 'team_score': score})
        remaining = remaining[group_size:]

    # leftovers
    if remaining:
        group_users = [uid_to_user[uid] for uid in remaining]

        group_personas_list = []
        for user in group_users:
            personas = UserPersona.query.filter_by(user_id=user.id).all()
            if personas:
                group_personas_list.append(personas)

        score = UserPersona.calculate_team_score(group_personas_list) if group_personas_list else 0.0
        groups.append({'user_uids': remaining, 'team_score': score})

    avg_score = sum(g['team_score'] for g in groups) / len(groups)

    # Always keep the first grouping, so a run where every team scores 0
    # still returns groups instead of None
    if best_grouping is None or avg_score > best_avg_score:
        best_avg_score = avg_score
        best_grouping = groups
```

Response format:

```json
{
  "groups": [...],
  "average_score": 75.0,
  "method": "ai"
}
```

3. Adding Feedback-Based Adaptation

I extended the system so users can optionally incorporate prior experiences when forming groups.

Frontend Changes

Inside the Smart Group modal, I added:

- A toggle: “Would you like to incorporate your prior experiences?”

- A structured form:

- Previous group size

- Student rating (1–5)

- Teacher rating (1–5)

- Personas present in that group

If enabled, the frontend sends:

```json
{
  "user_uids": [...],
  "group_size": 4,
  "incorporate_prior_experiences": true,
  "feedback_rows": [...]
}
```

```javascript
function collectFeedbackRowsForTraining() {
  const toggleOn = document.getElementById("usePriorExpToggle")?.checked;
  if (!toggleOn) return [];

  const stored = (typeof window.getAllGroupFeedback === "function")
    ? window.getAllGroupFeedback()
    : [];

  const normalizedStored = (stored || []).map(r => ({
    ts: r.ts,
    source: "stored_group_feedback",
    personas: r.personas || null, // optional until you store personas
    student_rating_1to5: r.student_rating_1to5,
    teacher_rating_1to5: r.teacher_rating_1to5,
    note: r.note
  }));

  // Inline prior experience (manual persona selection)
  const prevSize = parseInt(document.getElementById("priorGroupSize")?.value || "0", 10);
  const studentRating = parseInt(document.getElementById("priorStudentRating")?.value || "0", 10);
  const teacherRating = parseInt(document.getElementById("priorTeacherRating")?.value || "0", 10);
  const note = (document.getElementById("priorNote")?.value || "").trim();
  const personas = [...document.querySelectorAll(".prior-persona-checkbox:checked")]
    .map(cb => cb.value);

  // Inline row only if user filled it
  const inlineRow = personas.length ? {
    ts: Date.now(),
    source: "inline_prior_experience",
    prev_group_size: prevSize,
    personas,
    student_rating_1to5: studentRating,
    teacher_rating_1to5: teacherRating,
    note
  } : null;

  return inlineRow ? [...normalizedStored, inlineRow] : normalizedStored;
}
```

4. Backend Update: Feedback → Score Adjustment

In Flask, I modified _FormGroups to:

- Parse feedback_rows

- Convert them into persona-pair score adjustments

- Apply those adjustments to team scoring

Core Idea

If a previous persona mix received high ratings, persona pairs in that mix receive a positive score delta.

If ratings were low, those persona pairs receive a negative delta.

Feedback Processing

```python
from collections import defaultdict


def _safe_int(v, default):
    """Coerce v to int, falling back to default when it cannot be parsed."""
    try:
        return int(v)
    except Exception:
        return default


def _normalize_feedback_rows(rows):
    """Keep only rows with at least two persona aliases and ratings within 1-5."""
    if not isinstance(rows, list):
        return []

    cleaned = []
    for r in rows:
        if not isinstance(r, dict):
            continue

        personas = r.get("personas")
        if not isinstance(personas, list) or len(personas) < 2:
            continue

        # Personas may arrive as plain strings or as dicts carrying an "alias" key
        persona_aliases = []
        for p in personas:
            if isinstance(p, str):
                persona_aliases.append(p.strip())
            elif isinstance(p, dict) and "alias" in p:
                persona_aliases.append(str(p["alias"]).strip())

        persona_aliases = [a for a in persona_aliases if a]
        if len(persona_aliases) < 2:
            continue

        # Missing or unparseable ratings fall back to the neutral value 3
        s = _safe_int(r.get("student_rating_1to5"), 3)
        t = _safe_int(r.get("teacher_rating_1to5"), 3)
        if not (1 <= s <= 5 and 1 <= t <= 5):
            continue

        cleaned.append({
            "personas": persona_aliases,
            "student_rating_1to5": s,
            "teacher_rating_1to5": t,
        })

    return cleaned


def _feedback_to_pair_delta(feedback_rows, alpha=2.0):
    """Turn rated persona mixes into a {(persona_a, persona_b): delta} map."""
    pair_delta = defaultdict(float)
    rows = _normalize_feedback_rows(feedback_rows)

    for r in rows:
        personas = r["personas"]
        avg = (float(r["student_rating_1to5"]) + float(r["teacher_rating_1to5"])) / 2.0
        centered = avg - 3.0  # -2..+2
        delta = centered * alpha  # scale strength of influence

        # Every unordered pair in the mix receives the same delta
        for i in range(len(personas)):
            for j in range(i + 1, len(personas)):
                p1, p2 = sorted([personas[i], personas[j]])
                pair_delta[(p1, p2)] += delta

    return dict(pair_delta)
```
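To make the arithmetic concrete, here is a small worked example. The pair_deltas function below is a condensed restatement of the centering and scaling step written only for this illustration (it skips validation and assumes clean rows); it is not the backend function itself, and the two sample rows are made up.

```python
from collections import defaultdict
from itertools import combinations


def pair_deltas(rows, alpha=2.0):
    """Condensed illustration of the rating -> pair delta step (no validation)."""
    deltas = defaultdict(float)
    for row in rows:
        avg = (row["student_rating_1to5"] + row["teacher_rating_1to5"]) / 2.0
        delta = (avg - 3.0) * alpha  # 3 is neutral, so delta spans -2*alpha..+2*alpha
        # Sorting first means each pair key comes out in alphabetical order.
        for a, b in combinations(sorted(row["personas"]), 2):
            deltas[(a, b)] += delta
    return dict(deltas)


learned = pair_deltas([
    # Well-rated trio: avg 4.5 -> centered 1.5 -> +3.0 for each of its 3 pairs
    {"personas": ["salem", "indy", "phoenix"],
     "student_rating_1to5": 5, "teacher_rating_1to5": 4},
    # Poorly rated duo: avg 2.0 -> centered -1.0 -> -2.0 for its single pair
    {"personas": ["indy", "salem"],
     "student_rating_1to5": 2, "teacher_rating_1to5": 2},
])
print(learned)
# {('indy', 'phoenix'): 3.0, ('indy', 'salem'): 1.0, ('phoenix', 'salem'): 3.0}
```

The indy/salem pair ends at +1.0 because deltas accumulate across rows: the +3.0 from the first experience is partly offset by the -2.0 from the second.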

Adjusted Scoring

```python
def _clamp(x, lo, hi):
    return max(lo, min(hi, x))


def _team_feedback_adjustment(persona_aliases, pair_delta, max_bonus=15.0):
    if not persona_aliases or len(persona_aliases) < 2 or not pair_delta:
        return 0.0

    total = 0.0
    for i in range(len(persona_aliases)):
        for j in range(i + 1, len(persona_aliases)):
            p1, p2 = sorted([persona_aliases[i], persona_aliases[j]])
            total += float(pair_delta.get((p1, p2), 0.0))

    return _clamp(total, -max_bonus, max_bonus)
```
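The effect of max_bonus is easiest to see with numbers. The sketch below is a condensed stand-in for the adjustment step, written for illustration only; the names clamp and team_adjustment and the uniform +3.0 deltas are assumptions for the example.

```python
from itertools import combinations


def clamp(x, lo, hi):
    return max(lo, min(hi, x))


def team_adjustment(aliases, pair_delta, max_bonus=15.0):
    """Sum the learned delta of every pair on the team, then bound the total."""
    total = sum(
        (pair_delta.get(pair, 0.0) for pair in combinations(sorted(aliases), 2)),
        0.0,
    )
    return clamp(total, -max_bonus, max_bonus)


# Assume every pair among the four student personas has learned a +3.0 delta.
pair_delta = {
    pair: 3.0 for pair in combinations(["cody", "indy", "phoenix", "salem"], 2)
}

trio = team_adjustment(["indy", "salem", "phoenix"], pair_delta)
quad = team_adjustment(["indy", "salem", "phoenix", "cody"], pair_delta)
print(trio)  # 9.0  (3 pairs x 3.0, under the cap)
print(quad)  # 15.0 (6 pairs x 3.0 = 18.0, capped at max_bonus)
```

Because the number of pairs grows quadratically with team size, the cap matters more for larger teams: without it, a handful of positive feedback rows could outweigh the base compatibility score.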

Final score:

```python
def _calculate_team_score_with_feedback(group_users, pair_delta):
    group_personas_list = []
    for user in group_users:
        personas = UserPersona.query.filter_by(user_id=user.id).all()
        if personas:
            group_personas_list.append(personas)

    base = UserPersona.calculate_team_score(group_personas_list) if group_personas_list else 0.0

    # Use primary student persona alias per user (indy/salem/phoenix/cody)
    student_aliases = []
    for user in group_users:
        a = _extract_primary_student_alias(user.id)
        if a:
            student_aliases.append(a)

    fb = _team_feedback_adjustment(student_aliases, pair_delta, max_bonus=15.0)
    return round(_clamp(base + fb, 0.0, 100.0), 2)
```
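As a quick check on the final step, the sketch below isolates the last line of the function: the feedback adjustment is added to the base score and the sum is kept inside 0 to 100. The helper name final_score and the sample numbers are hypothetical.

```python
def clamp(x, lo, hi):
    return max(lo, min(hi, x))


def final_score(base, feedback_adjustment):
    """Add the (already bounded) feedback term and keep the result in 0..100."""
    return round(clamp(base + feedback_adjustment, 0.0, 100.0), 2)


print(final_score(75.0, 7.5))    # 82.5  (ordinary case: base plus adjustment)
print(final_score(92.0, 15.0))   # 100.0 (107.0 is capped at the top of the scale)
print(final_score(10.0, -15.0))  # 0.0   (-5.0 is raised to the bottom of the scale)
```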

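To prevent runaway bias, adjustments are bounded with the _clamp helper defined above: the feedback adjustment is limited to ±15 points (max_bonus=15.0), and the final score is limited to the 0–100 range.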

5. Endpoint Response After Update

Without feedback:

```json
{
  "average_score": 75.0,
  "method": "ai",
  "feedback_used": false
}
```

With feedback:

```json
{
  "average_score": 82.5,
  "method": "ai_feedback",
  "feedback_used": true,
  "learned_pairs": 8
}
```

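Both responses come from the same return statement (the samples above omit the groups field for brevity):

```python
return {
    "groups": best_grouping,
    "average_score": round(best_avg_score, 2),
    "method": "ai_feedback" if incorporate and pair_delta else "ai",
    "feedback_used": bool(pair_delta),
    "learned_pairs": len(pair_delta)
}, 200
```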

6. Technical Challenges: Feedback Validation

Feedback rows must be validated to ensure:

- Personas are present

- Ratings are within 1–5

- Malformed rows are ignored

```python
feedback_rows = body.get("feedback_rows", [])

if incorporate_prior_experiences:
    feedback_rows = _normalize_feedback_rows(feedback_rows)
```
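To show which rows survive these rules, here is a condensed checker written only for illustration. The name is_usable_row is hypothetical, and it handles string personas only (the real normalizer also accepts dicts with an "alias" key). The sample rows are made up.

```python
def safe_int(v, default):
    try:
        return int(v)
    except (TypeError, ValueError):
        return default


def is_usable_row(row):
    """Condensed restatement of the acceptance rules (string personas only)."""
    if not isinstance(row, dict):
        return False
    personas = row.get("personas")
    if not isinstance(personas, list):
        return False
    aliases = [p.strip() for p in personas if isinstance(p, str) and p.strip()]
    if len(aliases) < 2:
        return False  # a single persona cannot form a pair
    s = safe_int(row.get("student_rating_1to5"), 3)  # missing -> neutral 3
    t = safe_int(row.get("teacher_rating_1to5"), 3)
    return 1 <= s <= 5 and 1 <= t <= 5


rows = [
    {"personas": ["indy", "salem"], "student_rating_1to5": 4, "teacher_rating_1to5": 5},
    {"personas": ["indy"], "student_rating_1to5": 4, "teacher_rating_1to5": 5},
    {"personas": ["indy", "salem"], "student_rating_1to5": 0, "teacher_rating_1to5": 4},
    {"personas": ["indy", "salem"], "teacher_rating_1to5": 4},
    "not a dict",
]
print([is_usable_row(r) for r in rows])
# [True, False, False, True, False]
```

The third row is worth noting: a rating of 0 is what the frontend sends when an inline rating field is left empty, so that row is dropped, while a rating that is missing entirely (fourth row) falls back to the neutral 3 and is kept.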

7. Current System Capabilities

The grouping system now:

- Forms teams using persona compatibility scoring

- Optionally incorporates structured prior experiences

- Learns persona-pair adjustments dynamically

- Returns metadata indicating whether feedback influenced scoring

The system does not persist learned weights across sessions yet, but it supports adaptive grouping within a single request cycle.

8. Summary

This trimester, I:

- Built the full group management interface (filters, search, add/remove, admin controls)

- Integrated Spring groups with Flask persona scoring

- Extended /api/persona/form-groups to incorporate feedback into scoring

- Verified score shifts with and without feedback

- Structured the UI to support controlled prior-experience input

The result is a grouping system that can adapt to prior outcomes while maintaining its original compatibility model.

Night at the Museum — User Testing Feedback (Glows & Grows)

Overall Experience

Night at the Museum was a useful stress test for our platform because it put real users in front of the group management and persona-based team formation workflow. The feedback was mixed in a helpful way: people understood the concept quickly and saw why it matters, but they also pointed out UI polish issues, interactivity gaps, and reliability problems (especially around the team former).

What stood out to me is that most comments were not about the idea being confusing — they were about execution details: visual design, clearer flows, and making features feel more “complete.”

Glows (What worked)

A lot of users felt the project is practical and classroom-relevant:

- “Very purposeful and will improve the future of computer science!”

- “Really helpful for CSSE and CSP students!”

- “Supports agile scrum and solves a real problem I have seen in classes”

On the UI side, multiple comments suggest the current layout/navigation is understandable:

- “Good ui!!”

- “Ui is clear and good”

- “The UI is easy to learn from, nothing is confusing”

And the persona/team concept landed well:

- “The concept of creating your persona with these choices is cool and the database stuff is cool”

- “Your AI powered model works really well”

That’s important because the core workflow I built (persona → scoring → grouping) is only valuable if people get it quickly when they first open it.

Grows (What needs improvement)

1) UI polish and visual design

There were repeated requests to make the UI more visually engaging:

- “Add more colors!”

- “Maybe a bit more colorful”

- “UI could be better”

Even though the page structure is functional (filters, modals, forms, preview panels), users are basically saying the design still feels like a prototype.

2) Interactivity / engagement

Some feedback wasn’t about correctness — it was about the product feeling too static:

- “Lot of grows seems like the games are not interactive the ui is not that interactive at all and some of the games are more like instructions than games I did not get any dopamine boosts”

This suggests we need more interactive “feedback loops” in the UI (micro-interactions, responsive elements, clearer progress cues), not just more features.

3) Group formation usability (clarity of workflow)

Even when the feature exists, the user flow has to be obvious:

- “When creating groups, it was a little civility how to add the people who were a good fit”

This matches what I’ve seen in the groups page: the system has powerful operations (search people, multi-select, add selected, collapse/expand member details), but the UI needs stronger guidance so the “right path” is clearer the first time.

4) Reliability issues / broken features

One comment was a direct reliability problem:

- “Some of the features are currently broken including the team former.”

This is especially important because the team former is the main “wow” feature. If it’s down, users can’t evaluate the actual model behavior, even if the backend logic is strong.

5) Model depth / persona coverage

A few comments were about the AI system being too limited:

- “Ai model should be more comprehensive”

- “Could be better”

- “Maybe consider more personas? Let them enter what THEY think they are”

This aligns with the work I started: extending group formation so it can incorporate prior experiences (feedback rows + ratings). The next logical step is expanding persona coverage and adding a self-identification option so users don’t feel boxed into fixed labels.

Key Takeaways (What I’m doing next based on this)

- UI: Improve visual identity and polish (colors, spacing, interactive cues) since multiple people asked for this directly.

- Interactivity: Add more responsive elements so the platform feels less like a set of instructions and more like an interactive tool.

- Group formation flow: Make the “how to form good groups” path clearer, especially around selecting members and understanding why a group is recommended.

- Stability: Prioritize fixing broken features, especially anything that blocks team formation demos.

- Model/personas: Expand persona options and support user self-identification, since people explicitly asked for that and it improves data quality for grouping.